Every email marketer should be A/B testing at every opportunity. Too often we fall into the trap of assuming we know exactly what works best for our audience. But that assumption has no data behind it. The only way to truly know which messaging is most effective is to run A/B tests and let the results decide.

Before we explore some of the most common tests, there are two very important rules that must be followed—that is, if you want to A/B test like a pro.

Rule #1: One Variable

Only one variable should differ between your two test groups. That means if you’re testing two subject lines against each other, everything else should remain the same: your email creatives, the day and time that you send both messages, and yes, even your preheaders. By keeping everything else identical, you’ll be able to pinpoint any differences in engagement to the one variable you adjusted.

Rule #2: Sample Size

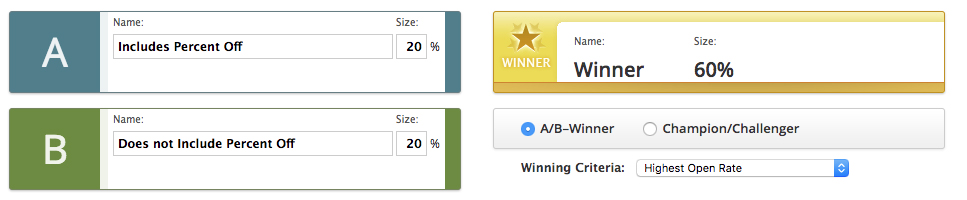

Make sure your sample sizes in each test group are large enough to produce significant results (more on this later). One way to increase your odds of achieving meaningful results is to send one version of a test to 50% of your subscribers, and another version to the other 50%. Another way to set up an A/B test is to send the test variations to just a portion of your subscribers, then send the winning content to the remainder. A good starting point may be a 20/20/60 split: 20% receives version A, 20% receives version B, and at a later time 60% receives whichever version was the winner. Depending on the size of your database, you may be able to decrease the initial testing split and still produce significant results.
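Here's a rough sketch of how the 20/20/60 split described above could be done in Python. The function name `split_subscribers`, the `test_fraction` parameter, and the example addresses are all made up for illustration, and most email service providers will handle this step for you. The key idea is that the groups are assigned at random, so neither version gets a head start.

```python
import random

def split_subscribers(subscribers, test_fraction=0.2, seed=42):
    """Randomly split subscribers into group A, group B, and a remainder.

    test_fraction is the share of the list that goes to EACH test group,
    so 0.2 gives a 20/20/60 split and 0.5 gives a 50/50 split.
    This is a hypothetical helper, not part of any email platform's API.
    """
    if not 0 < test_fraction <= 0.5:
        raise ValueError("test_fraction must be greater than 0 and at most 0.5")

    # Shuffle a copy so the original list is left untouched.
    shuffled = list(subscribers)
    random.Random(seed).shuffle(shuffled)

    n_test = int(len(shuffled) * test_fraction)
    group_a = shuffled[:n_test]
    group_b = shuffled[n_test:2 * n_test]
    remainder = shuffled[2 * n_test:]  # receives the winning version later
    return group_a, group_b, remainder


# Example with placeholder addresses.
subscribers = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_subscribers(subscribers)
print(len(group_a), len(group_b), len(remainder))
```

Passing `test_fraction=0.5` gives the simple 50/50 setup, with nothing left over for a later send.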

With those two major rules summed up, let’s move on to a few of the most common A/B tests.

Subject Line

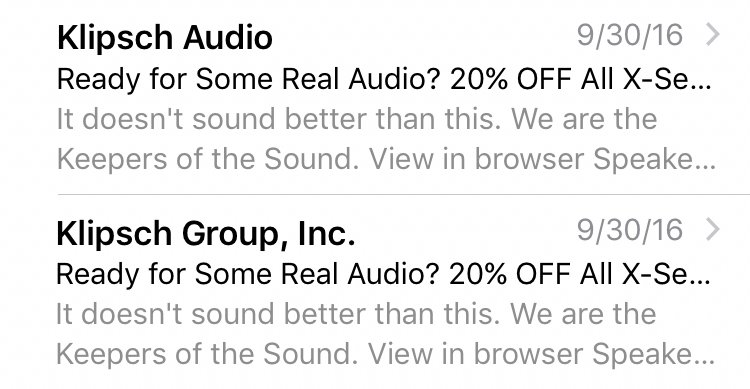

Your subject line is some of the lowest-hanging fruit, and often a great place to start. You may be surprised at how much open rates change depending on whether or not you include the percent off in an email advertising a sale. The tests here are limitless. Change up your leading words, experiment with variations in capitalization, or compare active and passive verbs. You could even throw some emojis in there to see if they have an impact.

From Name

If you have an iPhone, go check out your Mail app real quickly. From your inbox preview, what font appears the largest? You may not have noticed before, but it’s actually the From Name. If you’ve been using “Inc.” in your From Name, it may be time to try dropping the formality to see if open rates increase. On the other hand, perhaps your organization could establish better rapport by including “Inc.” You won’t know for sure until you test.

Content/Creative

Similar to how you would test a landing page, you can test the content within your email message. What’s more effective at driving click engagement—lifestyle imagery or product imagery? A CTA button that says “Shop Now” or “Save Now”? A static HERO image or a HERO image that’s an animated GIF? A navigation bar located along the top of your template or along the bottom of your template? The answers may surprise you. Better get testing!

And those tests just scratch the surface. A/B tests can be applied to workflows and automations, for example to test the cadence at which messages are sent. You can test how sending on a Monday versus Wednesday impacts subscriber engagement. What if open rate increases on Monday, but CTR increases on Wednesday? This is important information to know! Don’t be intimidated by the possibilities. There’s no better time to start A/B testing than now.

Rule #2 Extended: Statistical Significance

One final note: the other half of rule #2. If you’re going to A/B test your emails like a pro, then you’re going to need to check for statistical significance. Just because one open rate looks higher than another doesn’t mean it really is; there may be more than meets the eye. For example, the number of emails delivered in each test group can have a big impact on whether or not the perceived difference, say 22% vs 24%, is actually meaningful. A statistically significant result means the difference you observed would be unlikely to show up by random chance alone if the two versions actually performed the same, so you can be reasonably confident the winner would win again if the test were repeated. There are a few online resources for calculating statistical significance, but to learn more about which specific metrics you should be testing, or if you need help selecting an email service provider that has A/B testing functionality, connect with a Wpromote strategist below.
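If you’d like to see what one of those calculators is doing, here’s a minimal sketch of one common approach, a two-proportion z-test, applied to the 22% vs 24% example. The function name and the delivered and open counts are made up for illustration, and your email platform or calculator of choice may use a different method.

```python
from math import sqrt, erfc

def two_proportion_z_test(opens_a, delivered_a, opens_b, delivered_b):
    """Two-sided z-test for the difference between two open rates.

    Returns (z, p_value). A small p-value (commonly below 0.05) suggests
    the gap is unlikely to come from random chance alone.
    Hypothetical helper for illustration; counts are emails, not percentages.
    """
    rate_a = opens_a / delivered_a
    rate_b = opens_b / delivered_b

    # Pooled open rate, assuming both versions perform the same.
    pooled = (opens_a + opens_b) / (delivered_a + delivered_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / delivered_a + 1 / delivered_b))
    if std_error == 0:
        raise ValueError("Cannot test when every email or no email was opened")

    z = (rate_b - rate_a) / std_error
    p_value = erfc(abs(z) / sqrt(2))  # two-sided p-value from the normal curve
    return z, p_value


# Same 22% vs 24% open rates, two different list sizes (made-up numbers).
_, p_small = two_proportion_z_test(110, 500, 120, 500)
_, p_large = two_proportion_z_test(2200, 10000, 2400, 10000)
print(f"500 per group:    p = {p_small:.3f}")
print(f"10,000 per group: p = {p_large:.5f}")
```

With 500 emails delivered per group, the p-value comes out well above 0.05, so the two-point gap could easily be noise. With 10,000 per group, the same gap falls well below 0.05. That’s why sample size and statistical significance are two halves of the same rule.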

